In the future, we may have virtual reality systems so well crafted that they are nigh indistinguishable from reality: environments that aren’t there, yet you can see and feel them. We are not quite there yet for ourselves, but we are there for our computers. Virtualization technology makes this possible. It has various applications and working principles, and we will try to explain them in a little more detail.

Virtual Machine & its Need

Introduction

Running a whole operating system traditionally requires a set of essential hardware, all at the disposal of that operating system. To run multiple OSs, you could set up multi-booting, but in that case you cannot run two operating systems at the same time. Virtual machines (VMs) make it possible to use more than one operating system simultaneously on the same set of hardware.

As the opening of this article suggests, a virtual machine is a kind of VR for operating systems. The VMs that we create make use of “virtual” hardware. As far as the OS running inside the VM can tell, this hardware is as real as any other, but the OS is only made to see it that way. The RAM, storage, and processor power it uses are only fractions of the real hardware. All of this virtualization and management is done by something called the hypervisor.

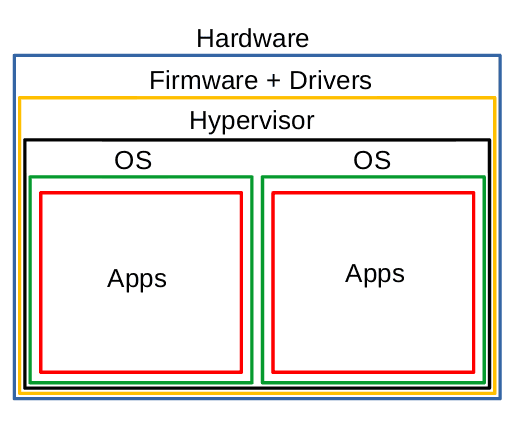

Hypervisor

A hypervisor is the firmware, software, or hardware that forms the central component of a VM setup. Let us clear up a little terminology here: the system on which the VMs are installed is called the host system, and the systems running inside the VMs are called guest systems. The hypervisor is the layer that manages all the resources between the VMs and the actual hardware of the system (or the OS that hosts the hypervisor). Even though the guest OSs run on virtual hardware, it is the job of the hypervisor to make it seem to each OS that it has access to the real hardware.

Hypervisors provide a stable, isolating border between the different OSs being run as VMs. The hypervisor simulates the hardware components for each VM, as configured by the user. The hardware that a VM utilizes (through the hypervisor) is a fraction of the actual hardware of the system. Thus, the VMs together cannot use more than the real hardware provides. For example, if you have 16 GB of RAM, you could give 8 GB to each of two VMs (in practice, you would keep some memory back for the host or the hypervisor itself).
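To make the resource limit concrete, here is a minimal sketch of the kind of check a user might do before configuring VMs. The names `VMConfig`, `fits_on_host`, and `reserved_ram_gb` are hypothetical and do not come from any real hypervisor. The sketch also assumes resources are simply added up, whereas real hypervisors may allow more flexible sharing.

```python
from dataclasses import dataclass


@dataclass
class VMConfig:
    """Hypothetical description of the resources requested for one VM."""
    name: str
    ram_gb: int
    cpu_cores: int


def fits_on_host(vms, host_ram_gb, host_cores, reserved_ram_gb=0):
    """Return True if the combined requests stay within the host's limits.

    reserved_ram_gb is memory kept back for the host OS or hypervisor.
    """
    total_ram = sum(vm.ram_gb for vm in vms)
    total_cores = sum(vm.cpu_cores for vm in vms)
    return (total_ram + reserved_ram_gb <= host_ram_gb
            and total_cores <= host_cores)


# Two VMs with 8 GB each on a 16 GB host, as in the example above.
vms = [
    VMConfig("vm-a", ram_gb=8, cpu_cores=2),
    VMConfig("vm-b", ram_gb=8, cpu_cores=2),
]

print(fits_on_host(vms, host_ram_gb=16, host_cores=8))
print(fits_on_host(vms, host_ram_gb=16, host_cores=8, reserved_ram_gb=2))
```

The first check passes because the two requests add up to exactly 16 GB. The second fails once 2 GB is set aside for the host, which is why an even 8 GB + 8 GB split is tight on a machine that also has to run a host OS.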

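The critical point is that hypervisors, the technology that makes VMs possible, do not strictly require any special hardware, although modern processors include virtualization extensions (such as Intel VT-x and AMD-V) that help them run faster. At its core, a hypervisor is an essential software component. There are two significant kinds of hypervisors: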

Type 2: Hosted Hypervisors

I am aware that I am presenting type 2 before type 1, but there is a reason for this sequence. Hosted hypervisors run at the application level. This might be familiar to you if you have ever used Oracle VM VirtualBox, VMware Workstation, or GNOME Boxes.

A hosted hypervisor is an application that allows you to install an OS as a virtual machine inside your OS (the OS in which the application itself is installed). This is very easy to set up and use. All you have to do is install an application that allows you to create VMs and get an image of the required OS. You can directly specify how much RAM, hard drive space, etc. the VM is allowed to use.

There are significant advantages to using a hosted hypervisor, especially for regular users like us. There is, however, a problem. The usual structure of a computer system follows this sequence:

- Physical hardware

- Firmware

- Drivers

- Operating System

- Applications

Getting into the technicalities a little bit, the software we use on a computer system has different “privileges.” For example, if just any software were permitted to configure your processor’s performance, it could easily mess up your whole system. That would be bad security practice. In reality, only the kernel of an OS gets to interact with the hardware directly. If an app requires access to a hardware component, it sends a request to the kernel, and the kernel provides an appropriate response. These requests are called system calls or syscalls.
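As a small illustration of an application asking the kernel to do work on its behalf, the sketch below uses Python's standard `os` module. `os.pipe`, `os.write`, and `os.read` are thin wrappers around the corresponding operating system calls, so the program never touches hardware or kernel memory itself. The variable names are only for illustration.

```python
import os

# Ask the kernel for a pipe; it hands back two file descriptors.
read_fd, write_fd = os.pipe()

# os.write and os.read pass the request to the kernel, which performs
# the actual data transfer and reports the result back to the program.
message = b"hello from user space"
bytes_written = os.write(write_fd, message)
received = os.read(read_fd, len(message))

# Release the descriptors, again by asking the kernel.
os.close(write_fd)
os.close(read_fd)

print(bytes_written, received)
```

The application only ever sees file descriptors and return values. Everything behind them is handled by the kernel, which is exactly the boundary that a hypervisor has to reproduce for a guest OS.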

Now take the case of a VM on a hosted hypervisor. Suppose you run an application on the guest OS. In simplified terms, this application sends a syscall to the kernel of the guest OS. That request is, in turn, interpreted by the hypervisor and converted into another syscall, which the hypervisor sends to the kernel of the host OS (because remember, the hosted hypervisor is just another application for the host OS). The kernel of the host OS sends its response to the hypervisor, which then has to convert it into the appropriate response for the application in the guest OS. Phew.
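The round trip described above can be traced with a toy model. This is only a sketch of the simplified picture in this article, not of how any specific hypervisor is implemented. All function names are hypothetical, and each function merely records that its layer was visited.

```python
def host_kernel(request, trace):
    """Stand-in for the host kernel, the only layer touching real hardware."""
    trace.append("host kernel")
    return f"result of {request}"


def hosted_hypervisor(request, trace):
    """Stand-in for a hosted hypervisor: an ordinary application on the host."""
    trace.append("hypervisor (translate request)")
    response = host_kernel(request, trace)
    trace.append("hypervisor (translate response)")
    return response


def guest_kernel(request, trace):
    """Stand-in for the guest kernel, which believes it owns the hardware."""
    trace.append("guest kernel")
    return hosted_hypervisor(request, trace)


def guest_application(trace):
    """Stand-in for an application running inside the guest OS."""
    trace.append("guest application")
    return guest_kernel("read_file", trace)


trace = []
result = guest_application(trace)
print(" -> ".join(trace))
print(result)
```

The printed trace lists every layer the request passes through on its way down and back up, which is the extra work a natively running application does not have to pay for.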

All this means that hosted hypervisors have to go through quite a long process. On most modern hardware, it doesn’t take as long as it sounds, but it still falls short of native speed and performance. The solution to this is the type 1 hypervisor.

Hosted Hypervisor

Type 1: Bare Metal Hypervisor

Straight to the point, the bare metal hypervisor sits on top of the firmware/driver layer. This means that it can interact with the hardware directly, just as an OS does. All of the required OSs are installed on top of the bare metal hypervisor, and the applications on top of those. This brings several advantages. The OSs installed on the hypervisor run very well, almost like native OSs, with minimal lag or stuttering. If the hardware that the hypervisor is installed on is powerful (as is usually the case with gaming computers or servers), it will be able to manage multiple OSs quite easily.

Bare Metal Hypervisor

Some common examples of bare metal hypervisors are VMware ESXi, Microsoft Hyper-V, Citrix XenServer, Xen, and Linux KVM.

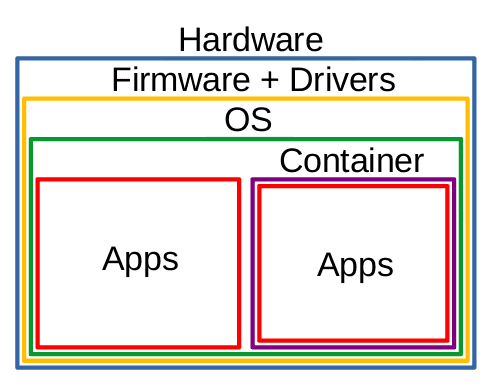

Containers

Containers are somewhat similar to VMs, but there is quite a bit of difference. As we have seen in the case of hosted hypervisors, VMs are used to install a whole OS, and then applications are installed and used on top of those OSs. A container, on the other hand, packages up the code of an application, its dependencies, tools, libraries, runtimes, and all other required things and runs just that application in a virtual environment.

Container

The image will make the hierarchy clearer. Notice that the container is installed on top of the OS, and then applications are run directly inside the container. There is no separate OS inside the container, as there is with VMs; the containerized application shares the kernel of the host OS.
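The three hierarchies discussed so far can also be compared side by side in a short sketch. The lists below follow the layering described in this article, with the firmware and driver layers left out for brevity. The helper name `layers_between` is hypothetical.

```python
# Layers from bottom (hardware) to top (application), as described above.
HOSTED_VM = [
    "Physical hardware",
    "Host OS",
    "Hosted hypervisor",
    "Guest OS",
    "Application",
]
BARE_METAL_VM = [
    "Physical hardware",
    "Bare metal hypervisor",
    "Guest OS",
    "Application",
]
CONTAINER = [
    "Physical hardware",
    "Host OS",
    "Container",
    "Application",
]


def layers_between(stack, lower="Physical hardware", upper="Application"):
    """Return the layers that sit strictly between two layers of a stack."""
    return stack[stack.index(lower) + 1:stack.index(upper)]


for name, stack in [("hosted VM", HOSTED_VM),
                    ("bare metal VM", BARE_METAL_VM),
                    ("container", CONTAINER)]:
    print(name, "->", layers_between(stack))
```

Only the two VM stacks contain a guest OS. The container stack goes straight from the host OS to the container to the application.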

Uses

So, we have delved into the working principles of VMs already. It is time to get to know how they can be useful in real-life scenarios.

Multiple Workstations from Single System

The first and primary selling point of VMs is that you can use multiple operating systems, separated from each other, at the same time, on the same machine. This opens up incredible possibilities. For example, if you need two workstations in the same place, you can buy one powerful system that is capable of running two separate systems at the same time. This can be very efficient.

This has widespread usage as well. If you require an application that runs only on an OS that you don’t use, you don’t have to install that operating system directly on your computer. You can install a hosted hypervisor on your OS and install the supported OS inside it. It is much easier to deal with and gets the job done.

Maximum Utilization

Maximum utilization of resources is the reason why virtualization is very popular for servers. A server is typically a very powerful computer, and it is difficult for a single OS to utilize the hardware’s resources completely. Solution? Install a bare metal hypervisor and run multiple operating systems that together use much more of the hardware.

Thus, VMs enable maximum utilization of the resources. But it is not only servers we are talking about. For example, if you have a powerful gaming computer, you can use its hardware more fully by, say, running one OS as your primary workstation and one as a NAS. Or maybe a larger number of OSs and tasks.

Power Efficiency

Since you can now run two systems on one machine instead of on two separate machines, you save a lot of electricity. It is good for your electricity bill, and it is also good for the environment.

Physical Space/Mobility

You can use one machine for multiple systems instead of several devices, so you naturally save a lot of physical space. One very powerful machine can satisfy the requirements of multiple ones, so if you have to move your infrastructure from one place to another, you will have less physical hardware to move than you otherwise would.

Recovery

This is a handy feature. VMs can take ‘snapshots’. Since the whole system is virtual, a hypervisor can save a copy of a VM’s properties, settings, and data, either on demand or at certain intervals of time. So if your system gets messed up or corrupted at some point, you can revert to one of the stable states, and not much harm will be done.
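The idea of taking a snapshot and reverting to it can be sketched with a toy class. `ToyVM` and its methods are hypothetical and keep the entire state in a Python dictionary. The sketch copies the full state each time for simplicity; real hypervisors typically use more space-efficient techniques.

```python
import copy


class ToyVM:
    """Hypothetical stand-in for a VM whose whole state is one dictionary."""

    def __init__(self):
        self.state = {"settings": {"ram_gb": 4}, "files": {}}
        self._snapshots = {}

    def take_snapshot(self, label):
        # Store an independent copy so later changes cannot alter it.
        self._snapshots[label] = copy.deepcopy(self.state)

    def revert(self, label):
        # Restore a copy, keeping the stored snapshot reusable.
        self.state = copy.deepcopy(self._snapshots[label])


vm = ToyVM()
vm.state["files"]["report.txt"] = "draft"
vm.take_snapshot("stable")

# Something goes wrong after the snapshot was taken.
vm.state["files"]["report.txt"] = "corrupted!"
vm.state["settings"]["ram_gb"] = 0

vm.revert("stable")
print(vm.state)
```

After the revert, both the file contents and the settings are back to what they were when the "stable" snapshot was taken.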

Testing Area

A VM (and, in fact, a container too) is often used as a testing ground. Problems that you create in a virtual setup are generally contained within it and do not harm the real system, which makes it an ideal place for testing new software (especially firmware). Developers often use VMs to check compatibility with different OSs as well.

Conclusion

Virtual machines have provided us with many improvements over our old methods. We can now run systems in a smaller space, more efficiently, and more safely. They have become an easy solution for using software that is not natively supported by your OS. VMs have become a haven for testing purposes. All in all, they are great for personal, professional, and environmental causes.

We hope that you found this article informative and helpful.